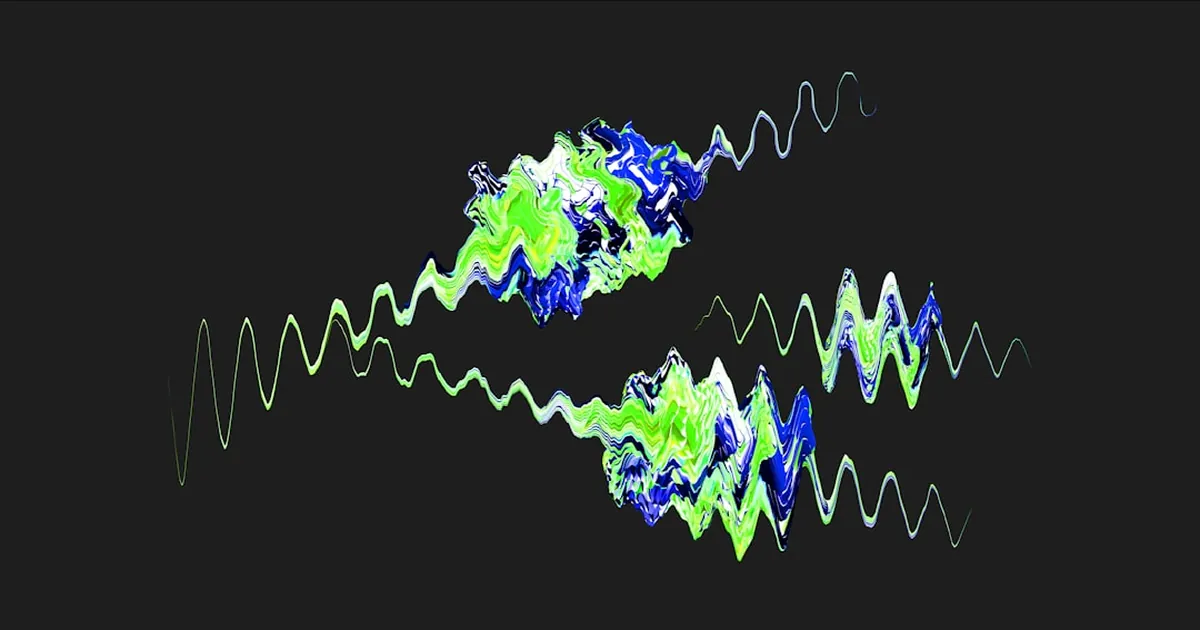

Every mix decision you make is filtered through a system that actively discards audio information. Your cochlea doesn't send a faithful representation of incoming sound to your brain — it sends a compressed, processed summary, shaped by rules that have been hardwired into human hearing for millions of years. Those rules have a name: auditory masking. And they're more specific, and more useful, than most audio education lets on.

The Cochlea Doesn't See Frequencies — It Sees Bands

The basilar membrane inside your cochlea vibrates in response to sound. Different regions respond to different frequencies, with high frequencies exciting the base and low frequencies exciting the apex. So far, so textbook.

What's less commonly taught: the cochlea doesn't process every frequency independently. It groups nearby frequencies into critical bands — approximately 24 of them, covering 20 Hz to 15.5 kHz. The Bark scale, proposed by Eberhard Zwicker in 1961, formalizes these boundaries.

Here's what makes this non-obvious: the bands are not equal in size. At low frequencies, a critical band spans only about 100 Hz. At 8 kHz, a single critical band can be over 1,000 Hz wide. The scale is roughly linear below 500 Hz, where bandwidth stays near 100 Hz, and becomes roughly logarithmic above, where bandwidth grows in proportion to frequency.

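Practical consequence: two sounds competing at 200 Hz and 240 Hz sit within a single critical band, roughly 100 Hz wide. A 40 Hz gap at 8 kHz is an even smaller fraction of a band, so raw Hz distance is not the useful measure. What changes is how much musical range each band swallows: in the bass, an entire octave (50 to 100 Hz) fits inside roughly one critical band, while the octave from 4 kHz to 8 kHz spans three to four. Proximity in Hz is not the same as proximity in perception. This is why low-frequency mud is so persistent: notes and instruments that are musically far apart still land in the same band and mask one another, which a typical EQ display doesn't reveal.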
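To put numbers on this, here is a minimal sketch that converts Hz to Bark and estimates critical bandwidth. It assumes the Zwicker and Terhardt (1980) analytic approximations, which are one of several published fits to the Bark scale; the function names are illustrative, not from any particular library.

```python
import math


def hz_to_bark(f_hz: float) -> float:
    """Critical-band rate in Bark (Zwicker & Terhardt 1980 approximation)."""
    return 13.0 * math.atan(0.00076 * f_hz) + 3.5 * math.atan((f_hz / 7500.0) ** 2)


def critical_bandwidth_hz(f_hz: float) -> float:
    """Approximate critical bandwidth in Hz around a center frequency."""
    return 25.0 + 75.0 * (1.0 + 1.4 * (f_hz / 1000.0) ** 2) ** 0.69


def bark_distance(f1_hz: float, f2_hz: float) -> float:
    """Perceptual distance between two frequencies, in Bark."""
    return abs(hz_to_bark(f2_hz) - hz_to_bark(f1_hz))


# Compare the pairs discussed in the text: same Hz gap, then same musical interval.
for low, high in [(200, 240), (8000, 8040), (50, 100), (4000, 8000)]:
    print(f"{low} -> {high} Hz: {bark_distance(low, high):.2f} Bark")

for center in (200, 8000):
    print(f"bandwidth near {center} Hz: {critical_bandwidth_hz(center):.0f} Hz")
```

Under this approximation, 200 to 240 Hz comes out at roughly 0.4 Bark, a 40 Hz gap at 8 kHz is well under 0.1 Bark, and the 50 to 100 Hz octave stays below 1 Bark while the 4 to 8 kHz octave covers several.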

Simultaneous Masking: Why Loud Things Erase Quiet Things

When a loud sound and a quiet sound occupy the same critical band at the same time, the quiet sound may simply become inaudible — masked completely. This is simultaneous masking.

The mechanism is largely mechanical: the louder sound dominates the basilar membrane's vibration in that region, which raises the detection threshold for anything else in the band. A quieter sound that falls below this raised threshold is effectively lost, much as a signal disappears under a raised noise floor.

The surprising part is upward spread of masking: a loud low-frequency signal doesn't just mask frequencies within its own critical band; it disproportionately masks higher frequencies too. The asymmetry comes from how the traveling wave moves along the basilar membrane: it enters at the high-frequency base and passes through those regions on its way to its low-frequency peak, and the membrane's nonlinear response makes the effect level-dependent. At low sound levels, masking is roughly symmetric. Above ~40 dB SPL, the masking pattern tilts upward, and the tilt grows more pronounced with increasing level.

In mixing terms: a kick drum at 80 Hz running hot doesn't just compete with your bass guitar in the low end. It raises the masked threshold in the 200–400 Hz range where your bass guitar needs to be heard, and can reach into the lower-mid presence of your vocals. The fact that they're not spectrally overlapping doesn't mean they're not interacting. Upward spread of masking is why "cleaning up the low end" so reliably clears the midrange: you're not just cutting mud, you're reducing the masking pressure that was being applied across multiple octaves above it.
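The level dependence can be sketched with a simple two-slope spreading model. The constants below are an assumption: they follow an approximation commonly attributed to Terhardt (a fixed 27 dB/Bark slope below the masker, and an upper slope of 24 + 230/f − 0.2·L dB/Bark). Treat the output as a rough illustration of the trend, not a calibrated masked threshold.

```python
import math

LOWER_SLOPE_DB_PER_BARK = 27.0  # falloff below the masker, assumed level-independent


def hz_to_bark(f_hz: float) -> float:
    """Critical-band rate in Bark (Zwicker & Terhardt 1980 approximation)."""
    return 13.0 * math.atan(0.00076 * f_hz) + 3.5 * math.atan((f_hz / 7500.0) ** 2)


def upper_slope_db_per_bark(masker_hz: float, masker_db_spl: float) -> float:
    """Falloff above the masker; a smaller value means masking reaches further up."""
    return 24.0 + 230.0 / masker_hz - 0.2 * masker_db_spl


def spread_level_db(masker_hz: float, masker_db_spl: float, probe_hz: float) -> float:
    """Approximate excitation the masker produces at probe_hz (dB, same reference)."""
    dz = hz_to_bark(probe_hz) - hz_to_bark(masker_hz)
    if dz >= 0:
        return masker_db_spl - upper_slope_db_per_bark(masker_hz, masker_db_spl) * dz
    return masker_db_spl + LOWER_SLOPE_DB_PER_BARK * dz


# An 80 Hz kick at two playback levels, probed at 300 Hz (hypothetical values).
for level in (60.0, 90.0):
    print(f"kick at {level:.0f} dB SPL -> {spread_level_db(80.0, level, 300.0):.1f} dB at 300 Hz")
```

In this model, raising the kick by 30 dB raises its excitation at 300 Hz by noticeably more than 30 dB, which is the nonlinear growth the text describes.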

Temporal Masking: Sound in Time Has a Blindspot

Masking doesn't only happen between simultaneous sounds. Your auditory system continues masking for a period after a loud sound ends — and, more surprisingly, can mask sounds that came slightly before the loud event.

Forward masking: After a loud sound stops, nearby quieter sounds remain masked for up to ~200 ms. The effect is strongest in the first 30 ms and decays nonlinearly, with the auditory system typically back to full sensitivity roughly 200 to 250 ms after masker offset.

Backward masking: A loud sound can mask a quieter signal that preceded it by up to 25 ms. This sounds impossible — how does a future event suppress perception of a past one? The answer is that auditory perception isn't truly real-time. The brain integrates sound over short windows, and a high-intensity event that arrives within ~25 ms can retroactively suppress the detection of a lower-intensity event that came just before it.

For producers, this matters in transient design. A snare hit that's extremely bright and sharp can backward-mask elements in the preceding 20 ms, including your hi-hat tail, because its broadband attack overlaps the hi-hat's frequency range. If you're wondering why your hi-hat disappears on a busy beat even though it looks spectrally separate from the snare's body, temporal masking is a likely culprit. Forward masking explains why the bass note following a hard kick transient needs more time or energy to register clearly: the kick is still masking the window in which the bass attacks.
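As a rough aid, the windows above can be turned into a check over a list of hits. This is a deliberately crude sketch: the `Hit` type and the example levels are hypothetical, it measures from onsets rather than masker offsets (reasonable only for short percussive hits), and it ignores frequency overlap, which real temporal masking depends on.

```python
from dataclasses import dataclass

FORWARD_WINDOW_MS = 200.0   # from the text: forward masking lasts up to ~200 ms
BACKWARD_WINDOW_MS = 25.0   # from the text: backward masking reaches back up to ~25 ms


@dataclass
class Hit:
    name: str
    onset_ms: float
    level_db: float  # relative level; only the ordering between hits matters here


def temporal_masking_risks(hits):
    """Return (masker, maskee, kind) for each quieter hit inside a louder hit's window."""
    risks = []
    for masker in hits:
        for maskee in hits:
            if maskee is masker or maskee.level_db >= masker.level_db:
                continue
            dt = maskee.onset_ms - masker.onset_ms  # positive: maskee follows masker
            if 0 < dt <= FORWARD_WINDOW_MS:
                risks.append((masker.name, maskee.name, "forward"))
            elif -BACKWARD_WINDOW_MS <= dt < 0:
                risks.append((masker.name, maskee.name, "backward"))
    return risks


beat = [Hit("hat", 480.0, -18.0), Hit("snare", 500.0, -6.0), Hit("bass", 530.0, -12.0)]
for masker, maskee, kind in temporal_masking_risks(beat):
    print(f"{masker} may {kind}-mask {maskee}")
```

A flagged pair is only a prompt to listen more closely, since the actual amount of masking falls off quickly with time and with spectral distance.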

Why MP3 Knows What You Can't Hear

MPEG-1 Audio Layer III (MP3) and its successor AAC implement a psychoacoustic model as their core compression strategy. The encoder analyzes the incoming signal, estimates the masked threshold in each critical band for each time window, and then allocates bits so that quantization noise stays below that threshold wherever the bit budget allows. Content that already sits below the masked threshold can get zero bits, since it's inaudible anyway.
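The core idea can be sketched in a few lines. This is not how any real MP3 or AAC encoder is implemented (those use iterative rate and distortion loops under a bitrate constraint); it only illustrates the principle, assuming the usual rule of thumb that each bit of uniform quantization buys about 6 dB of signal-to-noise ratio. The band levels are made-up numbers.

```python
import math

DB_PER_BIT = 6.02  # rule of thumb: one extra quantizer bit lowers noise by ~6 dB


def bits_per_band(signal_db, masked_threshold_db):
    """Bits needed per band so quantization noise lands at or below the masked threshold."""
    bits = []
    for level, threshold in zip(signal_db, masked_threshold_db):
        smr = level - threshold  # signal-to-mask ratio in dB
        # A band whose signal is already under the threshold is inaudible: spend nothing.
        bits.append(max(0, math.ceil(smr / DB_PER_BIT)))
    return bits


# Hypothetical levels for four bands in one analysis window.
signal = [70.0, 62.0, 40.0, 55.0]
threshold = [45.0, 50.0, 48.0, 54.0]
print(bits_per_band(signal, threshold))
```

The third band sits below its masked threshold, so it receives no bits at all, while the first band has the largest signal-to-mask ratio and receives the most.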

This is why a good 192 kbps MP3 sounds transparent to most listeners on most material: the codec is discarding largely the information your cochlea was already going to discard. Artifacts at low bitrates (below ~128 kbps) appear when there are too few bits to keep quantization noise under the masked threshold, or when the encoder's psychoacoustic model makes bad predictions. Transients are the most common trouble spot: quantization noise spreads across the whole analysis block and can land ahead of the attack as pre-echo, outside the short backward-masking window. High-frequency content is another, since it is often the first thing a bit-starved encoder cuts or quantizes coarsely.

The Missing Fundamental: Perception Constructing What Isn't There

One more effect, this one from pitch perception rather than masking: the missing fundamental. A tone with harmonics at 200, 300, and 400 Hz is heard as having a pitch of 100 Hz, even though there is no physical energy at 100 Hz. The harmonics are spaced 100 Hz apart, and your auditory system reconstructs the fundamental that spacing implies.
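A small experiment makes the point concrete. The sketch below builds the 200/300/400 Hz tone, confirms there is no energy at 100 Hz, and then finds the repetition rate of the waveform with a plain autocorrelation. Autocorrelation is used here only as a stand-in for periodicity detection; it is an assumption for illustration, not a model of how the auditory system computes pitch.

```python
import math

SAMPLE_RATE = 8000
HARMONICS_HZ = (200, 300, 400)


def synthesize(duration_s: float = 0.1):
    """Sum of equal-amplitude sines at the harmonic frequencies."""
    n = int(SAMPLE_RATE * duration_s)
    return [sum(math.sin(2 * math.pi * f * i / SAMPLE_RATE) for f in HARMONICS_HZ)
            for i in range(n)]


def magnitude_at(signal, freq_hz: float) -> float:
    """Single-bin DFT magnitude, scaled so a unit-amplitude sine reads about 1.0."""
    re = sum(x * math.cos(2 * math.pi * freq_hz * i / SAMPLE_RATE) for i, x in enumerate(signal))
    im = sum(x * math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE) for i, x in enumerate(signal))
    return 2 * math.hypot(re, im) / len(signal)


def repetition_rate_hz(signal, min_hz: int = 60, max_hz: int = 500) -> float:
    """Frequency whose period maximizes the autocorrelation of the signal."""
    window = len(signal) // 2
    best_lag, best_score = None, -math.inf
    for lag in range(SAMPLE_RATE // max_hz, SAMPLE_RATE // min_hz + 1):
        score = sum(signal[i] * signal[i + lag] for i in range(window))
        if score > best_score:
            best_lag, best_score = lag, score
    return SAMPLE_RATE / best_lag


tone = synthesize()
print("energy at 100 Hz:", round(magnitude_at(tone, 100), 6))
print("energy at 200 Hz:", round(magnitude_at(tone, 200), 6))
print("waveform repeats at:", repetition_rate_hz(tone), "Hz")
```

The spectrum has nothing at 100 Hz, yet the waveform as a whole repeats 100 times per second, which is the regularity the ear latches onto.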

This has a direct engineering implication. Small speakers and earbuds incapable of reproducing 80 Hz bass can still give a bass impression because the harmonics of a bass guitar or kick drum are present and your brain fills in the fundamental. This is why careful saturation or harmonic excitement on the bass bus can improve bass perception on laptop speakers — you're not restoring the missing low frequency, you're giving the perceptual system enough harmonic content to infer it.
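To see why saturation helps, the sketch below drives an 80 Hz sine through a tanh soft clipper and measures what appears at the harmonics. The tanh curve and the drive value are assumptions chosen for illustration; real saturation plugins use many different transfer curves. A symmetric curve like this one adds odd harmonics only (240 Hz, 400 Hz, and so on), which are still spaced so that they imply an 80 Hz fundamental.

```python
import math

SAMPLE_RATE = 8000
FUNDAMENTAL_HZ = 80
NUM_SAMPLES = 800  # 0.1 s, a whole number of 80 Hz cycles


def saturate(x: float, drive: float = 4.0) -> float:
    """Symmetric soft clipper; the drive amount is an arbitrary illustrative value."""
    return math.tanh(drive * x)


def magnitude_at(signal, freq_hz: float) -> float:
    """Single-bin DFT magnitude, scaled so a unit-amplitude sine reads about 1.0."""
    re = sum(x * math.cos(2 * math.pi * freq_hz * i / SAMPLE_RATE) for i, x in enumerate(signal))
    im = sum(x * math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE) for i, x in enumerate(signal))
    return 2 * math.hypot(re, im) / len(signal)


clean = [math.sin(2 * math.pi * FUNDAMENTAL_HZ * i / SAMPLE_RATE) for i in range(NUM_SAMPLES)]
driven = [saturate(x) for x in clean]

for harmonic in (1, 2, 3, 5):
    f = harmonic * FUNDAMENTAL_HZ
    print(f"{f} Hz: clean {magnitude_at(clean, f):.3f}, saturated {magnitude_at(driven, f):.3f}")
```

A speaker that rolls off below 150 Hz would lose the 80 Hz component of both signals, but only the saturated version leaves behind harmonics from which the low pitch can be inferred.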

Applying This

Three things to carry into your next session:

1. EQ in Bark, not Hz. Below 500 Hz, critical bands are only about 100 Hz wide, so sounds less than 100 Hz apart can share a band. Above about 6 kHz, sounds 1,000 Hz apart might still sit within the same band. Think in critical bands rather than raw Hz: small frequency moves mean more down low.

2. Level drives masking. Upward spread of masking increases nonlinearly with SPL. A compressed bass that sits 3 dB hotter than the uncompressed version will mask disproportionately more of the midrange. Dynamics processing changes masking relationships, not just loudness.

3. Transient spacing matters psychoacoustically. Elements hitting within 20–30 ms of each other don't just overlap in time; they interact through temporal masking. If two attacks land within this window, the quieter one may become perceptually invisible, especially when the two share frequency content.

The cochlea is running a lossy compression algorithm on everything you hear. The question is whether your mix works with that algorithm or against it.