When you drag a drum loop to half tempo in Ableton Live, something mathematically interesting happens before you hear the result. The audio does not simply get slowed down. It gets disassembled into hundreds of overlapping frequency snapshots, each one carefully stitched back together at a new position in time. The algorithm doing that stitching is the phase vocoder, first described in 1966 and still the basis of most frequency-domain time-stretch implementations.

Understanding it does not just satisfy curiosity. It explains why your snares smear when you stretch too far, why some DAWs handle transients better than others, and what the tradeoff is every time you choose an algorithm.

The Core Idea: Treat Audio as Overlapping Spectra

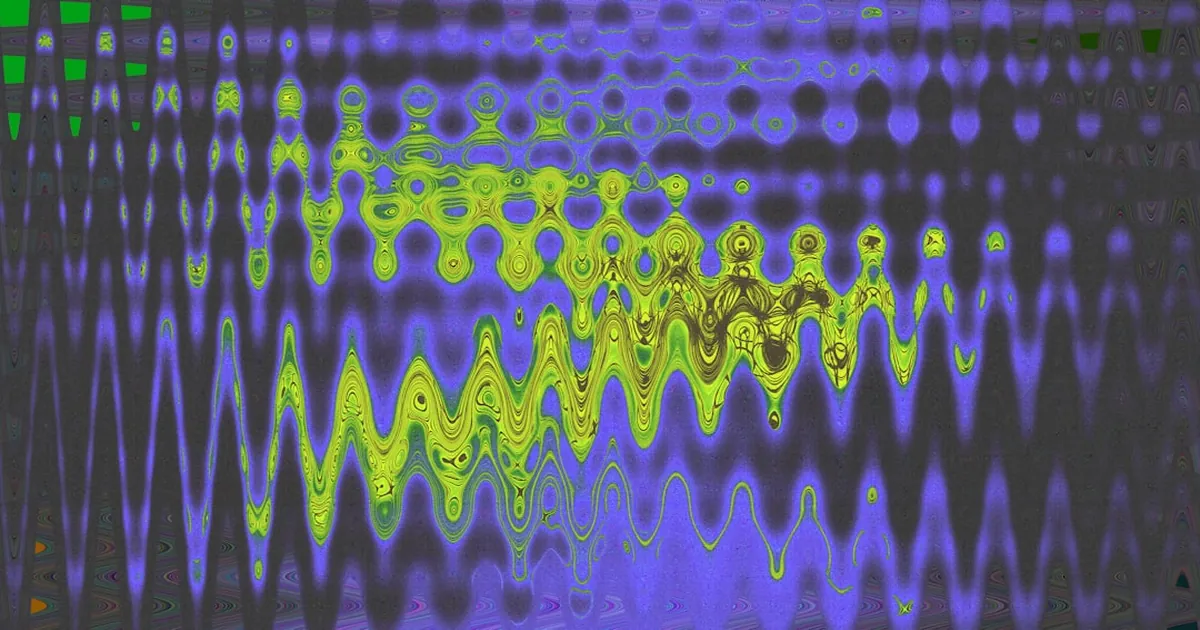

The phase vocoder works on the short-time Fourier transform (STFT). Instead of computing one FFT over the entire signal, you compute many of them on short, overlapping windows. Each window captures a 20 to 50 ms slice of audio, with a smooth taper (typically a Hann window) applied to reduce spectral leakage at the edges.

Each FFT frame gives you a set of frequency bins. FFT sizes of 512 to 4096 samples are typical, and for real-valued audio an N-point FFT yields N/2 + 1 unique bins. Each bin has two values: a magnitude and a phase. Together these encode how much of a given frequency is present, and where in its cycle that frequency component currently sits.
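As a rough illustration of the analysis step, here is a minimal NumPy sketch. The function name `stft` and the default sizes (a 2048-sample window, a 512-sample hop) are assumptions chosen for the example, not settings taken from any particular DAW.

```python
import numpy as np

def stft(signal, n_fft=2048, hop=512):
    """Return a (frames, n_fft // 2 + 1) array of complex spectra."""
    window = np.hanning(n_fft)  # Hann taper to reduce leakage at the frame edges
    n_frames = 1 + (len(signal) - n_fft) // hop
    frames = np.stack([signal[i * hop : i * hop + n_fft] * window
                       for i in range(n_frames)])
    # rfft keeps only the non-redundant bins of a real-valued input
    return np.fft.rfft(frames, axis=1)

sr = 44100
t = np.arange(sr) / sr
spectra = stft(np.sin(2 * np.pi * 1000.0 * t))  # one second of a 1000 Hz tone

magnitude = np.abs(spectra)   # how much of each frequency is present
phase = np.angle(spectra)     # where in its cycle each component sits
peak_bin = int(np.argmax(magnitude[0]))  # strongest bin in the first frame
```

Each row of `spectra` is one frame, and `magnitude` and `phase` are the two per-bin values described above.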

To reconstruct the audio, you invert each FFT frame back to the time domain and overlap-add the results, each frame offset by a fixed number of samples called the synthesis hop size. If every frame is placed back exactly where it came from, you get the original signal. The constant overlap-add (COLA) constraint makes this precise: if the shifted copies of the window sum to a constant, an unmodified signal reconstructs perfectly. A Hann window with 75% overlap satisfies it; the same window with 25% overlap does not.
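The overlap-add condition can be checked numerically. The sketch below sums shifted copies of a periodic Hann window and tests whether the total is flat. The helper name `overlap_add_window_sum` is made up for this example.

```python
import numpy as np

def overlap_add_window_sum(n_fft, hop, n_frames=16):
    """Sum shifted Hann windows and return the fully overlapped middle part."""
    n = np.arange(n_fft)
    window = 0.5 - 0.5 * np.cos(2 * np.pi * n / n_fft)  # periodic Hann
    total = np.zeros(hop * (n_frames - 1) + n_fft)
    for i in range(n_frames):
        total[i * hop : i * hop + n_fft] += window
    # Drop one window length at each end, where fewer frames overlap.
    return total[n_fft:-n_fft]

steady = overlap_add_window_sum(2048, 512)      # 75% overlap
is_cola = bool(np.allclose(steady, steady[0]))  # flat sum: constraint holds

sparse = overlap_add_window_sum(2048, 1536)     # 25% overlap
ripple = sparse.max() - sparse.min()            # clearly non-zero: not COLA
```

When the sum is flat, dividing by that constant undoes the windowing exactly. When it ripples, the output picks up an amplitude modulation at the hop rate.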

Time Stretching: One Hop Size for Analysis, Another for Synthesis

Here is where it gets clever. During analysis you move through the input with a fixed hop size, say 512 samples. During synthesis you place the frames further apart, say 1024 samples. The content of each frame stays identical, but you are reassembling them at twice the spacing. The output is twice as long: the signal plays at half speed.

Pitch shifting is built on top of this. You time-stretch first, then resample the result. Stretch to double length, then resample back to the original duration (in effect playing the stretched audio twice as fast), and the pitch rises by one octave while the duration stays the same.
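The arithmetic behind that recipe is small enough to write down. This is a sketch with a hypothetical helper, `pitch_shift_plan`, that turns a shift in semitones into a stretch ratio and a synthesis hop, assuming the analysis hop of 512 samples used above.

```python
def pitch_shift_plan(semitones, analysis_hop=512):
    """Return (ratio, synthesis_hop) for a pitch shift done as stretch + resample."""
    # Frequency ratio of the shift: +12 semitones doubles the frequency.
    ratio = 2.0 ** (semitones / 12.0)
    # Stretch the audio by `ratio` first...
    synthesis_hop = round(analysis_hop * ratio)
    # ...then read the stretched audio `ratio` times faster to restore the length.
    return ratio, synthesis_hop

octave_up = pitch_shift_plan(12)   # (2.0, 1024)
fifth_up = pitch_shift_plan(7)     # ratio just under 1.5
```

For a downward shift the ratio drops below 1, so the synthesis hop becomes shorter than the analysis hop and the resampling step slows the audio down instead.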

That sounds clean on paper. In practice it falls apart immediately if you just move the frames and do nothing else to the phases.

The Phase Problem

Each FFT bin represents a range of frequencies, not a single exact frequency. A 2048-point FFT on a 44100 Hz signal has bins spaced roughly 21.5 Hz apart. A sinusoid sitting at 1000 Hz does not land cleanly in one bin. It lands somewhere between bins, and it leaks into adjacent ones.
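The numbers in that example are easy to verify. The variable names below are chosen for illustration only.

```python
sample_rate = 44100
n_fft = 2048
tone_hz = 1000.0

bin_spacing = sample_rate / n_fft        # about 21.53 Hz between bin centers
fractional_bin = tone_hz / bin_spacing   # about 46.44: not a whole bin index

lower = int(fractional_bin)              # nearest bin below the tone
lower_hz = lower * bin_spacing           # center frequency just under 1000 Hz
upper_hz = (lower + 1) * bin_spacing     # center frequency just over 1000 Hz
```

The tone falls between bins 46 and 47, so its energy is shared between them and their neighbors.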

When you stretch by changing the synthesis hop size, consecutive frames are placed further apart in time than they were analyzed. The phase at each bin in the output frame needs to match what that frequency would naturally produce at the new, later position. If you just paste in the raw analyzed phase, adjacent frames will have phases that are inconsistent with the timing gap between them. The result is phase cancellation between overlapping frames, heard as a characteristic smearing and metallic wash called "phasiness."

The fix requires computing the instantaneous frequency of each bin, which is the actual frequency of the sinusoidal component that bin is tracking, not just the nominal bin center frequency. You get this from the phase difference between the same bin in consecutive analysis frames. Subtract the phase advance that the bin's center frequency would produce over one analysis hop, wrap the remainder into the range -π to π, and divide by the hop length: the result is how far the true frequency sits from the bin center. When you place the synthesis frame at its new position, you propagate the phase forward by exactly the amount this true frequency would accumulate over the longer synthesis hop.
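Putting the pieces together, a bare-bones phase vocoder fits in a few dozen lines of NumPy. This is a teaching sketch under stated assumptions, not how any commercial stretcher is built: the function name `phase_vocoder_stretch`, the 512 and 1024 hop sizes from the earlier example, and the normalization by the summed squared window are all choices made here for illustration.

```python
import numpy as np

def phase_vocoder_stretch(x, n_fft=2048, hop_a=512, hop_s=1024):
    """Time-stretch x by hop_s / hop_a with per-bin phase propagation."""
    n = np.arange(n_fft)
    window = 0.5 - 0.5 * np.cos(2 * np.pi * n / n_fft)    # periodic Hann
    n_frames = 1 + (len(x) - n_fft) // hop_a
    # Center frequency of each bin in radians per sample.
    omega = 2 * np.pi * np.arange(n_fft // 2 + 1) / n_fft
    out = np.zeros(hop_s * (n_frames - 1) + n_fft)
    norm = np.zeros_like(out)
    prev_phase = None
    synth_phase = None
    for i in range(n_frames):
        spec = np.fft.rfft(x[i * hop_a : i * hop_a + n_fft] * window)
        mag, phase = np.abs(spec), np.angle(spec)
        if prev_phase is None:
            synth_phase = phase.copy()        # first frame: keep analysed phase
        else:
            # Measured advance minus the advance expected at the bin center,
            # wrapped into [-pi, pi).
            dev = phase - prev_phase - omega * hop_a
            dev = (dev + np.pi) % (2 * np.pi) - np.pi
            inst_freq = omega + dev / hop_a   # instantaneous frequency
            # Advance the output phase over the (longer) synthesis hop.
            synth_phase = synth_phase + inst_freq * hop_s
        prev_phase = phase
        frame = np.fft.irfft(mag * np.exp(1j * synth_phase), n=n_fft) * window
        start = i * hop_s
        out[start : start + n_fft] += frame
        norm[start : start + n_fft] += window ** 2
    # Compensate for the uneven window overlap; the floor avoids dividing by ~0.
    return out / np.maximum(norm, 1e-3)

sr = 44100
tone = np.sin(2 * np.pi * 1000.0 * np.arange(sr) / sr)
stretched = phase_vocoder_stretch(tone)   # roughly twice as long, same pitch
```

Replacing `synth_phase` with the raw `phase` inside the loop turns this into the naive version, which is a quick way to hear what the propagation step buys you.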

This phase propagation step is what separates a phase vocoder from a naive overlap-add time stretcher. Without it, audio degrades almost immediately.

Vertical Coherence and Why Drums Still Sound Wrong

The phase propagation described above handles what researchers call horizontal coherence: the relationship between the same bin across consecutive frames. For sustained tones like strings or pads, this works remarkably well.

But there is a second problem: vertical coherence, the relationship between adjacent bins within a single frame. A real sound is not made of independent sinusoids. A piano note at 220 Hz produces energy at 220, 440, 660, 880 Hz and above, all phase-locked to the same fundamental period. When you independently rotate the phase of each bin to satisfy horizontal coherence, you break the relative phase relationships between them. Harmonics that were locked together get scrambled. The result is the characteristic loss of "presence" or "solidity" that makes stretched material sound synthetic.

Laroche and Dolson addressed this in 1999 by grouping the bins around each spectral peak, propagating only the phase of the dominant bin in the group, and rotating its neighbors by the same amount so that their phase offsets from the peak are preserved. This keeps the vertical structure within each harmonic cluster intact and dramatically improves quality for pitched material.
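A simplified version of that idea, in the spirit of what the paper calls identity phase locking, might look like the sketch below. The function name `lock_phases_to_peaks`, the three-point peak picker, and the nearest-peak grouping are simplifying assumptions for illustration; the published method is more careful about how peaks and their regions are chosen.

```python
import numpy as np

def lock_phases_to_peaks(mag, analysis_phase, synth_phase):
    """Give every bin the phase rotation of its nearest spectral peak."""
    # Simple peak picker: a bin larger than its immediate neighbors.
    peaks = np.array([k for k in range(1, len(mag) - 1)
                      if mag[k] > mag[k - 1] and mag[k] >= mag[k + 1]])
    if peaks.size == 0:
        return synth_phase.copy()
    # Assign each bin to the closest peak (its "region of influence").
    bins = np.arange(len(mag))
    nearest = peaks[np.argmin(np.abs(bins[:, None] - peaks[None, :]), axis=1)]
    # Rotation that phase propagation applied to each peak bin.
    rotation = synth_phase[nearest] - analysis_phase[nearest]
    # Apply the same rotation to every bin in the group, so the phase
    # offsets between a peak and its neighbors match the analysis frame.
    return analysis_phase + rotation
```

In a full stretcher this would run once per frame, after the peak bins have been propagated and before the inverse FFT.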

Transients are a different failure mode entirely. A drum hit is not a collection of steady sinusoids. It is a near-instantaneous energy burst that spreads across the entire spectrum simultaneously, with all bins sharing the same onset moment. Phase vocoder analysis assigns each bin its own instantaneous frequency and propagates independently, breaking the synchrony that defines the transient. The sharp attack gets smeared into a pre-echo or a ghost tail. This is why time-stretching a snare at 50% tempo has always sounded wrong unless the algorithm specifically handles transients.

The standard modern approach detects transients using onset detection on the magnitude spectrum, and switches to a simpler overlap-add without phase modification at those points, preserving the original phase relationships across the attack. zplane's Elastique, licensed in Ableton Live, Reaper, Bitwig Studio and others, is proprietary, so its internals are not public, but it appears to use a hybrid strategy of this kind. Melodyne uses a different approach entirely: it separates notes via pitch detection first, then manipulates each note's pitch and duration individually, sidestepping much of the phase problem by operating at the note level rather than the frame level.
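One common way to do onset detection on the magnitude spectrum is spectral flux: sum the bin-by-bin increases in magnitude from one frame to the next and flag frames where that sum spikes. The sketch below assumes this method and a simple mean-plus-deviation threshold. Both are illustrative choices, and the name `spectral_flux_onsets` is hypothetical.

```python
import numpy as np

def spectral_flux_onsets(magnitude, threshold=1.5):
    """Return indices of frames whose spectral flux stands out as a transient.

    magnitude: array of shape (frames, bins), e.g. the absolute STFT values.
    """
    diff = np.diff(magnitude, axis=0)
    # Count only increases in energy; decays after a hit are ignored.
    flux = np.sum(np.maximum(diff, 0.0), axis=1)
    # Assumed rule of thumb: flag frames well above the average flux.
    limit = flux.mean() + threshold * flux.std()
    # flux[i] compares frame i + 1 with frame i, hence the + 1.
    return np.flatnonzero(flux > limit) + 1
```

A hybrid stretcher would reuse the analyzed phases for the flagged frames instead of propagating them, then resume propagation once the attack has passed.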

What You Can Hear Directly

If you want to audition these failure modes deliberately: load a 120 BPM drum loop, stretch it to 60 BPM, and listen for pre-echo on the snare attack. That ghost hit before the transient is the phase smear from inadequate transient handling. Switch algorithms (many DAWs expose more than one, for example Elastique Pro alongside a cheaper Efficient mode) and you will hear the difference directly. The higher-quality modes cost more CPU, presumably because they do more work per frame, such as peak grouping and transient detection.

On a sustained string pad, the failure mode sounds different: stretch to half tempo and listen for a metallic shimmer on sustained notes. That is vertical incoherence, the out-of-phase harmonics beating against each other in the overlap-add reconstruction.

The Algorithm Is Older Than You Think

James Flanagan and Roger Golden at Bell Labs described the original phase vocoder in 1966, intended for voice compression over telephone lines, not music production. The analysis-synthesis framework sat in academic DSP literature for roughly two decades before audio software developers found it useful for editing. Flanagan's design was a bank of bandpass filters rather than an FFT, but the STFT analysis and overlap-add synthesis running inside Ableton Live right now are a direct reformulation of the same idea.

What changed over six decades is the sophistication of the phase propagation and the handling of the two coherence problems. The underlying math is the same. Every time you time-stretch, you are running a telephony algorithm from 1966 with sixty years of patches applied on top.